A data scientist's guide to stress-free product scraping

Data science is one of the fastest-growing job categories in business: data scientist positions are expected to grow 34% by 2034.

Retail and e-commerce are embracing data science like no other industry because, in a competitive environment, price analytics has become a needle-mover.

As a data scientist, your job is to find patterns, build models, and generate insights. To do that, you first need to reliably acquire web data. Competitor pricing, product specifications, consumer reviews - you name it, data scientists need it.

The reality for data-gatherers, however, is that you spend 80% of your time scratching your head at 403 Forbidden responses, managing proxy pools, and angrily rewriting broken selectors when a target website adds a new field and changes up its CSS.

The days of simply firing off a GET request and peacefully parsing DOM elements are largely over. The modern web is hostile to automated scripts, and traditional scraping playbooks are fundamentally broken.

Let’s look at why the classic approach now fails, the hidden costs of modern workarounds, and how you can get back to actual data science using automated extraction.

Beautiful Soup vs. the modern web

Let me show you the traditional approach to web scraping. It's the first thing you learn in a web data tutorial: you grab the requests library to fetch the page and BeautifulSoup to parse it.

Here is what that looks like:
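
A minimal sketch of that classic pattern follows. The URL in the usage comment and the h1.product-title selector are placeholders chosen for illustration, not taken from any real site.

```python
import requests
from bs4 import BeautifulSoup


def parse_product_name(html):
    """Return the page <title> and the product name, if the selector matches."""
    soup = BeautifulSoup(html, "html.parser")
    title = soup.title.get_text(strip=True) if soup.title else None
    # Hypothetical selector: every site needs its own, and it breaks on redesigns.
    node = soup.select_one("h1.product-title")
    name = node.get_text(strip=True) if node else None
    return title, name


def scrape_product(url):
    """Fetch a page with a plain GET request and parse the response body."""
    response = requests.get(url, timeout=10)
    title, name = parse_product_name(response.text)
    return response.status_code, title, name


# Usage (placeholder URL):
# status, title, name = scrape_product("https://example.com/product/123")
# print(status, title, name)
```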

You expect to get a 200 OK response and a neatly formatted product name. Instead, you get a 403 Forbidden and a title that says "Just a moment..."

Welcome to the brick wall. Your script didn't fail because your code was wrong; it failed because the server inspected your client's fingerprint. Your request didn't look like it came from a real browser, and because you didn't execute the page's JavaScript, you were instantly categorised as a bot.

Down the rabbit hole

To get past the 403s, you’ll typically start looking for ways to patch up your script.

Proxies and infrastructure: You realise your IP might be blocked, so you look into residential proxies. You quickly discover you have to build complex rotation logic, handle retries, and manage bans manually.

Headless browsers: Because the site relies heavily on JavaScript to render the actual product price, the requests library alone isn't enough. So, you spin up Selenium or Playwright. Suddenly, your resource consumption skyrockets, and your scraping speed slows to a crawl.

HTML parsing: Even if you successfully manage the bans, you still have to write custom parsers for hundreds of different e-commerce layouts. And the moment a site updates its UI, your scraper breaks again.

LLMs aren’t as helpful as you’d think: You decide to feed the raw HTML into an LLM to extract the data. It works to a degree, but there are context size problems, you can’t verify the data quality, and when you calculate the token costs for 100,000 product pages you realise you’ll likely need a second mortgage.
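
To give a sense of what the rotation, retry, and ban handling mentioned above involves, here is a simplified sketch. It is an illustration under assumptions rather than a production design: fetch_with_rotation, AllAttemptsFailed, and the caller-supplied fetch(url, proxy) function are hypothetical names, and treating only 403 and 429 as "blocked" is a simplification.

```python
import itertools
import time

# Assumption: these statuses mean "this proxy was blocked or rate-limited".
BLOCKED_STATUSES = (403, 429)


class AllAttemptsFailed(Exception):
    """Raised when every attempt was blocked or errored."""


def fetch_with_rotation(url, proxies, fetch, max_attempts=5, backoff_seconds=1.0):
    """Try `url` through successive proxies until one attempt is not blocked.

    `fetch(url, proxy)` is supplied by the caller and returns (status, body).
    Returns (body, proxy_used, attempts_made).
    """
    banned = set()
    attempts = 0
    for proxy in itertools.cycle(proxies):
        # Stop when the attempt budget is spent or every proxy is banned.
        if attempts >= max_attempts or len(banned) == len(set(proxies)):
            break
        if proxy in banned:
            continue
        attempts += 1
        try:
            status, body = fetch(url, proxy)
        except OSError:
            # Network-level failure: treat this proxy as unusable.
            banned.add(proxy)
            continue
        if status in BLOCKED_STATUSES:
            # Blocked: ban the proxy, wait a little longer each time, rotate.
            banned.add(proxy)
            time.sleep(backoff_seconds * attempts)
            continue
        # Any other status is handed back to the caller in this sketch.
        return body, proxy, attempts
    raise AllAttemptsFailed(f"{url}: gave up after {attempts} attempts")
```

Even this toy version ignores proxy health scoring, per-site session handling, and un-banning proxies after a cool-down, all of which a real pool needs.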

Why bandwidth pricing is a trap (and why proxies aren't enough)

Let’s be honest: web scraping isn’t free anymore, and to get data at scale, proxies are mandatory. But there is a massive misconception that simply buying a proxy pool will solve all your problems.

Traditional proxy providers charge by the gigabyte (ie, for bandwidth). This sounds fine until you realise what you are actually paying for:

Failed requests: When a site throws a CAPTCHA or blocks your IP, you still pay for the bandwidth of that failed response.

Retries: If it takes your scraper 10 attempts to successfully fetch a specific product page, you are paying for all nine failures.

Useless data: You are paying to download heavy CSS, tracking scripts, and images you don't even need for your dataset.
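
To see how failed attempts inflate a bandwidth bill, here is a back-of-the-envelope calculation. The function names and every input are hypothetical; plug in your own provider's rate and your own measured page sizes.

```python
def bandwidth_cost_per_success(price_per_gb, success_mb, failed_mb, attempts_per_success):
    """Cost of one usable page when every attempt, failed or not, is billed.

    Assumes the final attempt succeeds and all earlier attempts fail.
    """
    failed_attempts = attempts_per_success - 1
    total_mb = success_mb + failed_attempts * failed_mb
    return price_per_gb * total_mb / 1024  # 1 GB taken as 1024 MB


def dataset_cost(pages, price_per_gb, success_mb, failed_mb, attempts_per_success):
    """Total bandwidth cost for `pages` successfully fetched pages."""
    per_page = bandwidth_cost_per_success(
        price_per_gb, success_mb, failed_mb, attempts_per_success
    )
    return pages * per_page
```

For example, with a 2 MB product page, 0.5 MB block pages, and 10 attempts per success, the nine failed attempts account for more than two-thirds of the bandwidth you pay for, whatever the per-gigabyte rate.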

One call does it all

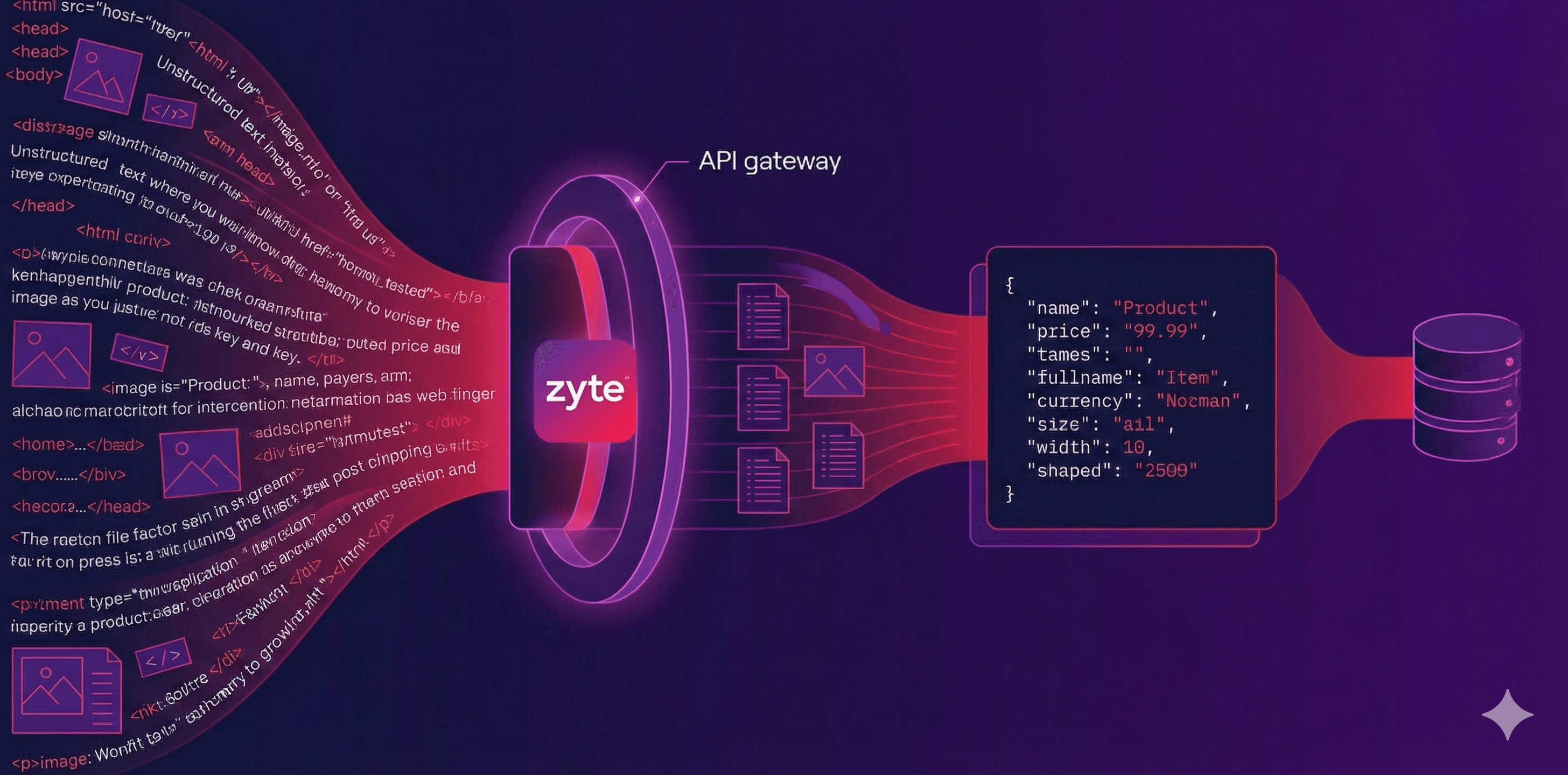

This is where Zyte API saves you. It is crucial to understand that Zyte API is far more than a proxy manager. While it does manage massive pools of residential and datacenter proxies under the hood, it is an all-in-one extraction engine. It handles browser rendering, session management, and anti-bot challenges, taking the pain out of accessing publicly available web data.

Because Zyte controls the whole pipeline, we offer a different pricing model: You only pay for successful requests. If Zyte has to rotate through five different IP addresses, spin up a headless browser, and retry a request multiple times to succeed, you don't pay for the retries. You only pay when the API returns a clean 200 OK with your data.

This predictability lets you forecast your costs accurately (eg, 100,000 product pages equals a fixed cost), eliminating the unpredictable bandwidth tax of modern scraping. The exact cost still depends on which websites you want data from, but we consistently come top in impartial benchmarks and tests.

Zyte API and AI Extraction

As a data scientist, you don't want to be a proxy manager, and you certainly don't want to be coding hundreds of HTML parsers. You just want structured data to do your job.

Zyte API acts as a single endpoint that abstracts away all the infrastructure headaches. But the real magic for e-commerce data is its AI Extraction feature.

By simply passing 'product': True in your payload, Zyte uses behind-the-scenes AI and ML models to read the page data, identify key e-commerce information, map it into our extensive pre-defined schema, and return a clean JSON object automatically.

And if you need more, we also have a custom attributes feature for you to use.

In all this, you don’t need to specify CSS selectors, you don’t have to budget any spend on LLM tokens, and you don’t have to perform any browser management.

Every one of those tasks would otherwise cost you time: time that could be spent on your data analysis and providing value to your clients or employer. Because e-commerce is fast-moving, up-to-date data is crucial. A simple website change that would otherwise break your pipeline is handled by our AI Extraction tool, so you always get a consistent schema.

What does the modern, stress-free approach look like? It’s just one API call:
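
A minimal sketch of that call using the requests library. The API key and product URL are placeholders, the helper names are illustrative, and the fields printed in the usage comment are examples of what the product schema can contain; check the Zyte API reference for the current request options.

```python
import requests

ZYTE_API_URL = "https://api.zyte.com/v1/extract"


def build_payload(url):
    """Request body asking Zyte API for AI-extracted product data."""
    return {"url": url, "product": True}


def extract_product(url, api_key):
    """POST the request and return the structured product record."""
    response = requests.post(
        ZYTE_API_URL,
        auth=(api_key, ""),  # API key as the Basic-auth username, empty password
        json=build_payload(url),
        timeout=120,
    )
    response.raise_for_status()
    return response.json()["product"]


# Usage (placeholder key and URL):
# product = extract_product("https://example.com/product/123", "YOUR_ZYTE_API_KEY")
# print(product.get("name"), product.get("price"), product.get("currency"))
```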

Instead of wrestling with Beautiful Soup to find the right div, you get back instant e-commerce data in a dependable JSON schema.
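
Because every record shares one schema, turning a batch of results into an analysis-ready table takes only a few lines. The sketch below assumes each record is a dict with optional name, price, currency, sku, and brand keys, that price may arrive as a string, and that brand may be a nested object with a name; confirm the exact field shapes against the product schema before relying on it.

```python
import csv
import io

# Assumed subset of product fields to keep; adjust to the schema you receive.
FIELDS = ["name", "price", "currency", "sku", "brand"]


def to_row(product):
    """Flatten one product record into a flat dict with a numeric price."""
    price = product.get("price")
    brand = product.get("brand")
    return {
        "name": product.get("name"),
        "price": float(price) if price is not None else None,
        "currency": product.get("currency"),
        "sku": product.get("sku"),
        # Accept either a nested {"name": ...} object or a plain string.
        "brand": brand.get("name") if isinstance(brand, dict) else brand,
    }


def products_to_csv(products):
    """Serialise a list of product records to CSV text, one row per product."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    for product in products:
        writer.writerow(to_row(product))
    return buffer.getvalue()
```

Missing fields come through as empty cells rather than errors, which keeps a long crawl from failing on one sparse page.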

Conclusion: Focus on data, not the extraction process

The landscape of web scraping has shifted. Building homegrown infrastructure to try to manage anti-bot systems is a losing game of whack-a-mole that drains your time, budget, and sanity. It’s a specialist skill that we have in spades, so let us help.

By offloading this to our all-in-one solution, Zyte API, you bypass the infrastructure bottlenecks and unpredictable bandwidth taxes entirely.

You stop building brittle scrapers and get back to what you were actually hired to do: building models and analysing data.

FAQ

Why is product scraping often considered stressful for data scientists?

Product scraping can be stressful because e-commerce websites are notoriously dynamic. Frequent layout changes, aggressive bans, and the sheer volume of data can lead to broken spiders and inconsistent datasets, requiring constant manual maintenance.

How does AI help in making web scraping stress-free?

AI-powered tools (like Zyte API's AI extraction) eliminate the need for manual selector maintenance. Instead of writing and fixing CSS or XPath selectors every time a website changes its design, AI can automatically identify and extract product names, prices, and descriptions by "understanding" the page structure, significantly reducing developer workload.

Should data science teams build their scraping tools in-house or outsource them?

While building in-house gives you total control, it often leads to high maintenance costs as you scale. For many teams, using a managed API is less stressful, as it allows data scientists to focus on analysing the data rather than the technical complexities of unblocking websites and maintaining infrastructure.

Is there a free trial for Zyte API?

Yes, we offer a free trial with generous credit, and no credit card is required.