Vizlegal: Rise of machine-readable laws and court judgments

Guest Post by Gavin Sheridan, founder/CEO of Vizlegal.

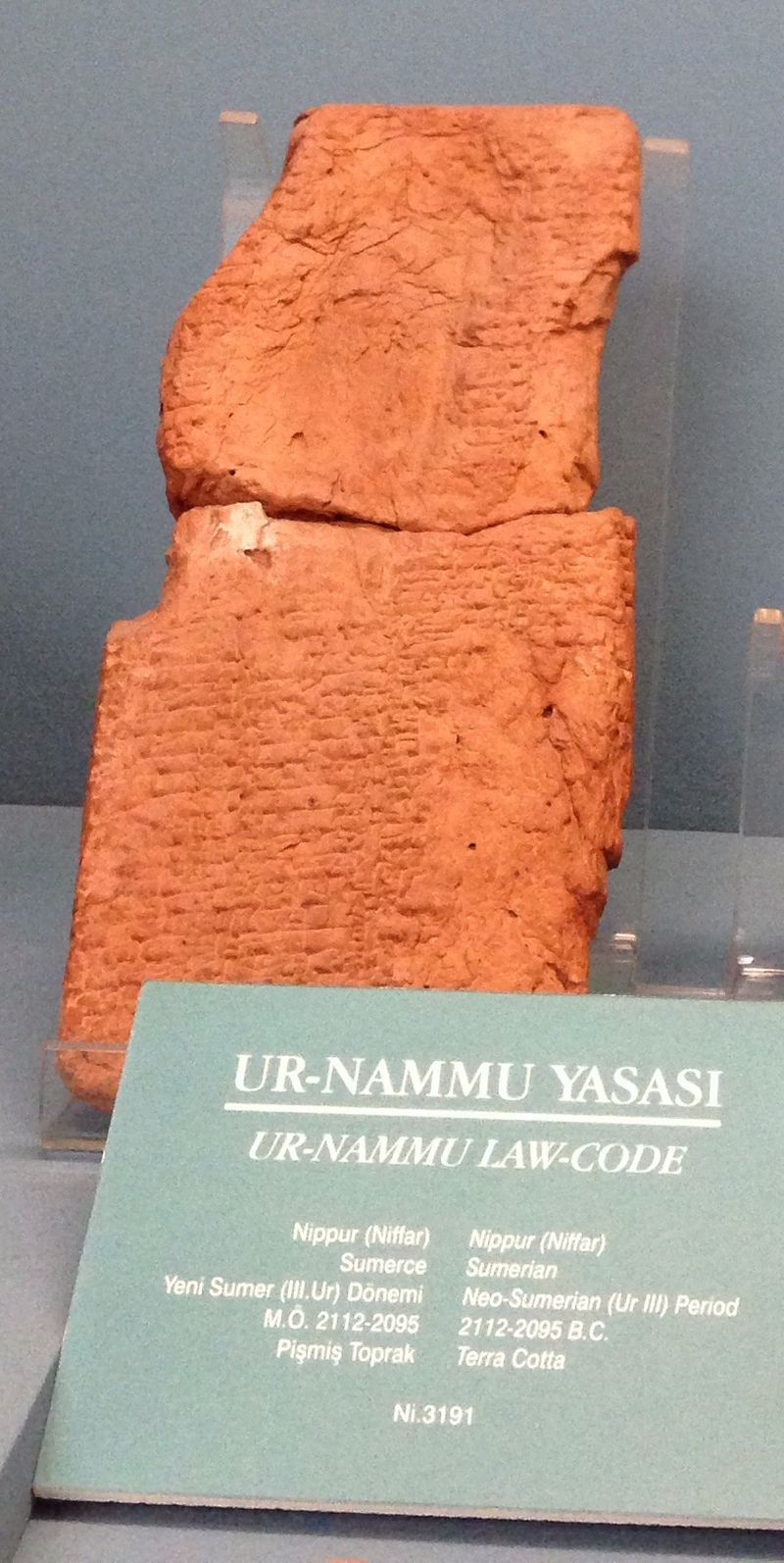

The Code of Ur-Nammu: it sounds like something dreamt up by George Lucas over a boozy lunch. In fact, it’s the name of one of the earliest examples of humans writing down laws. The stone tablets of the code were found in modern-day Iraq and date back more than 4,000 years.

"Ur Nammu code Istanbul" by Istanbul Archaeology Museum

In the following millennia, humans continued to write down laws while also keeping a record of the judgments of courts. From stone to wax tablets to paper, civilizations experimented with the best medium to share these laws and judgments. The Romans codified some of their laws - The Twelve Tables - as did the Chinese via the Tang Code.

The leap from stone to paper was a huge milestone. This allowed for laws and judgments to be easily copied and disseminated, later helped along by the wonders of the printing press.

The Paragon Press

As we progressed into the information age, we moved into a far more sophisticated world. Most modern countries now use the internet to disseminate the laws of their parliaments and the rulings of their courts, mostly using one wondrous modern technology: the PDF.

I can hear the techies among you sniggering. But seriously, in 4,000 years we’ve managed to go from writing laws on stone to writing them in PDFs. Some countries use HTML to publish laws and judgments. Very, very few have built APIs.

This is a problem we’re working on at Vizlegal. We believe that all legal information should be available via APIs. We also believe the Git model should be used for versioning both legislation and court judgments. We would prefer to live in a world of machine-readable law, where sections of Acts or portions of judgments are accessible not just via search, but via an API call. APIs give applications a simple way to access legal information. At present, if an application wants to access a law, there is essentially no way to do it.
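To make "a section of an Act via an API call" concrete, here is a minimal sketch of what such a lookup could return. Everything in it is an assumption for illustration: the `ACTS` store, the `get_section` function, the identifier `example-act-2016` and the section text are all made up, and none of it describes Vizlegal’s actual API.

```python
import json

# Hypothetical in-memory store standing in for a legislation database.
# The Act, its identifier and its wording are invented for this example.
ACTS = {
    "example-act-2016": {
        "title": "Example Act 2016",
        "sections": {
            "1": "This Act may be cited as the Example Act 2016.",
            "2": "In this Act, a document includes any electronic record.",
        },
    }
}


def get_section(act_id: str, section: str) -> dict:
    """Return one section of an Act as structured data.

    Raises KeyError if the Act or the section does not exist.
    """
    act = ACTS[act_id]
    return {
        "act_id": act_id,
        "act_title": act["title"],
        "section": section,
        "text": act["sections"][section],
    }


if __name__ == "__main__":
    # What an application might receive instead of a PDF.
    print(json.dumps(get_section("example-act-2016", "2"), indent=2))
```

The point is the shape of the answer: an application asks for one addressable section and gets back structured data it can use directly, rather than a whole document it has to parse.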

Some of you might be wondering: why would we need versioning of court judgments? Aren’t court judgments simply written, published and disseminated and that’s the end of the story?

No.

As The New York Times reported, even the US Supreme Court alters its judgments after publication, in some cases years afterwards. Unfortunately, it has been pretty secretive about it too. In October it announced a new policy of providing slightly more detail about its editing, albeit in a technically ad hoc fashion. This doesn’t go far enough - it’s 2016, not 1996. Adding some text at the end of a document to say it has been edited is no substitute for a proper revision history, something Wikipedia solved 15 years ago.

We should be able to see each stage of the alterations in judgments, with the most up-to-date version clearly displayed. So too with laws. Additionally, if I’m reading a law, I should be able to jump to which judges interpreted it and how (and vice versa). Most constitutions are in place to ensure that justice is administered in public. We believe that laws and judgments must also be as accessible as possible and fully transparent to ensure trust in the democratic system.
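As a rough illustration of what seeing "each stage of the alterations" could look like, the sketch below keeps every published revision of a judgment and produces a Git-style diff between any two of them using Python’s standard `difflib` module. The `Judgment` class, its method names and the sample text are hypothetical and chosen only for this example.

```python
import difflib
from dataclasses import dataclass, field


@dataclass
class Judgment:
    """A judgment that keeps every published revision, oldest first."""

    title: str
    revisions: list[str] = field(default_factory=list)

    def publish(self, text: str) -> int:
        """Store a new revision and return its zero-based revision number."""
        self.revisions.append(text)
        return len(self.revisions) - 1

    @property
    def latest(self) -> str:
        """The most up-to-date text of the judgment."""
        return self.revisions[-1]

    def diff(self, old: int, new: int) -> list[str]:
        """Return a unified diff between two stored revisions."""
        return list(
            difflib.unified_diff(
                self.revisions[old].splitlines(),
                self.revisions[new].splitlines(),
                fromfile=f"revision {old}",
                tofile=f"revision {new}",
                lineterm="",
            )
        )


if __name__ == "__main__":
    judgment = Judgment("Example v. Example")  # invented case name
    judgment.publish("The appeal is allowed.\nCosts are reserved.")
    judgment.publish("The appeal is dismissed.\nCosts are reserved.")
    print("\n".join(judgment.diff(0, 1)))
```

Nothing is overwritten here: the latest text is always available, and any earlier wording can be compared against it line by line.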

And how does Zyte help us with this? Well, as mentioned earlier, most countries in the common law English-speaking world are actively publishing laws, judgments and administrative decisions online like it’s 1999 - HTML and PDFs - with no APIs, no document versioning and little or no connection between laws and the judgments that interpret them. This is bad for the legal industry, bad for legislatures, bad for government, bad for industry, and bad for society. Law is one of the core components of nation states. Minute changes in the wording of laws and obscure interpretations actively affect the lives of billions of people each and every day.

Plenty of government-built websites publish court judgments. Unfortunately, they are usually poorly designed, with little or no thought given to the users who must access them every day. Their user interfaces are often non-existent or sloppily implemented, and they rarely provide an open data source - the approach is basically “publish some HTML and move on”.

Zyte provides the most comprehensive services for gathering this raw unstructured information into our API, which serves our web application and which in turn serves our customers. Zyte grabs this information and converts it into data. We then use this data to build our own platform and analytics.

Our goal is to take the legal field from an information-rich industry to a data-rich one. Zyte’s essential package of tools buttresses our mission - and it is a “mission” because the information sources are so vast and varied that gathering them by ourselves would consume enormous time and resources.

We believe in making laws and judgments machine-readable because we can’t imagine a future of humanity that hasn’t done this (and we also believe in a future of law-abiding robots).
