Introducing data reviews

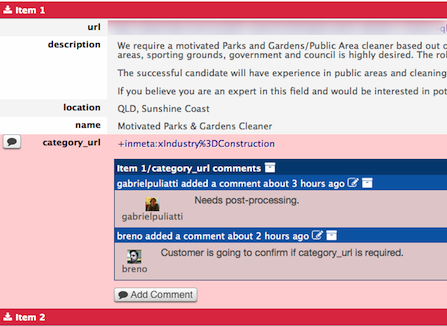

One of the most time-consuming parts of building a spider is reviewing the scraped data and making sure it conforms to the requirements and expectations of your client or team. Depending on how well the requirements are written, this review often takes longer than writing the spider code itself. To make the process more efficient, we have introduced the ability to comment on data directly in Dash (Zyte UI), right next to the data, instead of relying on other channels such as issue trackers, email, or chat.

With this new feature, you can discuss data problems right where they appear, without copying and pasting data around, and keep the conversation going with your client or team until the issue is resolved. This reduces the time spent on data QA and makes the whole process more productive and rewarding.

So go ahead, start adding comments to your data (you can comment on whole items or on individual fields) and let the conversation flow around data! You can mark issues as resolved by archiving their comments, and jobs with unresolved (unarchived) comments are flagged directly on the Jobs Dashboard.

Last, but not least, you have the Data Reviews API to insert comments programmatically. This is useful, for example, to report problems in post-processing scripts that analyze the scraped data.
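As a rough illustration of that post-processing use case, here is a minimal Python sketch of a script that checks scraped items and prepares one comment per problem. The required fields, the `build_review_comments` helper, and the shape of each comment dictionary are all assumptions made for this example; they are not the actual Data Reviews API payload, and the step that submits the comments is deliberately left out.

```python
from typing import Any

# Hypothetical requirements for this example: every item needs these fields.
REQUIRED_FIELDS = ("title", "price")


def build_review_comments(items: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Return one comment per missing or empty required field.

    The comment layout (item index, field name, text) is an assumed
    structure for illustration, not the real Data Reviews API format.
    """
    comments = []
    for index, item in enumerate(items):
        for field in REQUIRED_FIELDS:
            value = item.get(field)
            if value is None or value == "":
                comments.append({
                    "item": index,
                    "field": field,
                    "text": f"Missing value for required field '{field}'",
                })
    return comments


# Sample scraped data: the second item has an empty title,
# and the third item has no price at all.
sample_items = [
    {"title": "Blue widget", "price": "9.99"},
    {"title": "", "price": "4.50"},
    {"title": "Red widget"},
]

comments = build_review_comments(sample_items)
for comment in comments:
    print(comment)
```

A script like this would then hand each comment to the Data Reviews API, so the problems show up next to the affected items and fields instead of in a separate report.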

Happy scraping!
